This article originally appeared in The State of Open Humanitarian Data 2026.

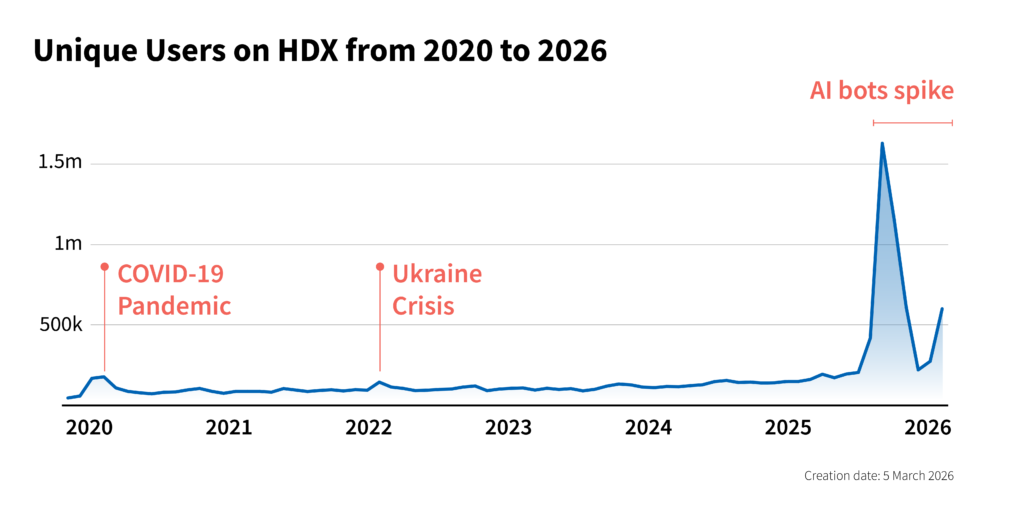

Although our annual report assesses the availability of data, we also want to understand its use, which is primarily measured through Internet traffic to websites such as HDX. Over the last year, the rise in AI bots – software applications that perform automated tasks – has created new challenges for accurately assessing data use.

Global web traffic has steadily increased over time, but the past year saw unprecedented spikes driven largely by a surge in automated activity. This includes verified and unverified bots and crawlers that are used to train models, retrieve content and perform user-directed tasks. While overall Internet traffic grew by 19 percent globally in 2025, AI-bot and crawler traffic almost quadrupled, climbing from 2.6 percent of verified bot requests in January to more than 10.1 percent by the end of the third quarter.

According to web analysts, this spike was primarily fueled by the aggressive rollout of Large Language Models (LLMs) from global tech giants, such as OpenAI’s ChatGPT, Anthropic’s Claude and Google’s Gemini, among others. To support these launches, companies deployed bots to ‘crawl’ the live web for fresh training data and real-time response generation. Over 25 percent of these bots are attributed to Google alone, signalling what some experts have called a ‘bot war’ between the various tech companies and content owners for fresh content. Models released late in the year introduced autonomous web navigation (where the bots navigate the web without human oversight), enabling them to execute multi-step tasks across the Internet on behalf of users. This makes them harder to distinguish from humans, skewing web analytics and masking real user behavior.

The impact of this bot activity was not distributed equally across the digital landscape. For the first time, civil society and nonprofits surpassed financial institutions as the most targeted sector for cyberattacks. Ultimately, it appears that organizations categorized under ‘People and Society’ (including humanitarian organizations) bore the brunt of AI bot activity throughout 2025.

How this shows up on HDX

HDX was no exception to these trends. In line with global Internet traffic, which spiked in August 2025, traffic to HDX rose throughout 2025 and accelerated into a significant peak in the second half of the year (see chart above). HDX managed periodic surges in server demand that reached 20–30 times baseline levels. Notably, even after rigorous security filtering, the platform recorded a fourfold year-over-year increase in unique pageviews.

The HDX team was forced to manage this unprecedented volume of requests without immediate clarity on which traffic was legitimate. To protect the user experience and maintain site stability, we implemented web application firewall protections. These included blocking requests from IP addresses known to be malicious, bot-control and managed rule groups, rate-based rules for request throttling, geolocation filtering, and other custom rules to block or challenge suspicious traffic.
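To make the idea of a rate-based rule concrete, here is a minimal sketch of a sliding-window throttle in Python. It is not the firewall configuration HDX uses. The class name `RateBasedRule`, the `limit` and `window_s` parameters, and the choice to key on client IP are all assumptions made for illustration.

```python
from collections import defaultdict, deque


class RateBasedRule:
    """Hypothetical sliding-window throttle: allow at most `limit`
    requests per client within any `window_s`-second window."""

    def __init__(self, limit: int, window_s: float) -> None:
        self.limit = limit
        self.window_s = window_s
        # Timestamps of recently allowed requests, per client IP.
        self._hits = defaultdict(deque)

    def allow(self, client_ip: str, now: float) -> bool:
        hits = self._hits[client_ip]
        # Drop timestamps that have fallen out of the sliding window.
        while hits and now - hits[0] >= self.window_s:
            hits.popleft()
        if len(hits) >= self.limit:
            return False  # over the limit: block or challenge
        hits.append(now)
        return True


rule = RateBasedRule(limit=3, window_s=10)
decisions = [rule.allow("203.0.113.7", t) for t in (0, 1, 2, 3)]
# The first three requests pass; the fourth, inside the same window, does not.
```

In this sketch, blocked requests are not counted towards the limit, so a client regains access as soon as its earlier requests age out of the window. A production rule would likely make different trade-offs.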

Distinguishing between human users and automated agents has become more of an art than a science. Currently, HDX categorizes traffic into three primary tiers:

- Wanted bots: This includes traditional search engine crawlers and programmatic users, as well as new AI-driven traffic identified by user-agent strings indicating indexing, training, or retrieval purposes.

- Unwanted bots: High-volume traffic associated with bots attempting to take down the site, aggressive scrapers, and security probes.

- The ‘grey zone’: Traffic that lacks identifying characteristics, providing no fingerprinting data or user-agent strings to verify intent.
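The three tiers above can be sketched as a simple classifier. This is only an illustration under stated assumptions, not HDX's actual logic. The `Request` fields, the `abusive_rpm` threshold, the list of user-agent tokens and the fallback label `"other"` (for traffic that matches none of the three tiers, such as ordinary browser sessions) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative user-agent tokens for crawlers a site might welcome (assumed list).
WANTED_TOKENS = ("googlebot", "bingbot", "gptbot", "claudebot")


@dataclass
class Request:
    user_agent: Optional[str]   # None when the header is missing
    fingerprint: Optional[str]  # None when no fingerprinting data is available
    requests_per_minute: int    # observed rate for this client
    hit_probe_path: bool        # True if it requested a known security-probe path


def classify(req: Request, abusive_rpm: int = 600) -> str:
    # Check abusive behavior first, so a spoofed "wanted" user agent
    # cannot excuse aggressive scraping or probing.
    if req.hit_probe_path or req.requests_per_minute > abusive_rpm:
        return "unwanted_bot"
    ua = (req.user_agent or "").lower()
    if any(token in ua for token in WANTED_TOKENS):
        return "wanted_bot"
    # Nothing to identify the client by: the grey zone.
    if not ua and not req.fingerprint:
        return "grey_zone"
    return "other"  # assumption: everything else, e.g. ordinary browsers


tier = classify(Request("Mozilla/5.0 (compatible; GPTBot/1.0)", None, 10, False))
```

User-agent strings are self-declared and easy to forge, which is one reason the behavioral check comes first here and why the article describes the task as more of an art than a science.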

Unique challenges for Open Data platforms

The traffic surges at HDX reflect a profound shift in our digital ecosystem, creating a new tension in our goal to provide humanitarian data that is easy to find and use. As the demand for high-quality training data grows, our commitment to open access has created an unexpected technical burden.

Machine learning algorithms are only as effective as the data they are trained on. In a digital landscape increasingly saturated with low-value memes and synthetic articles, curated humanitarian data represents a gold mine for LLM training and retraining. Thus, platforms like HDX have become primary targets for aggressive AI crawlers seeking structured, high-fidelity information.

Much of the world’s valuable information remains locked behind paywalls, proprietary systems, or private Intranets. In contrast, HDX follows the fundamental principle of Open Data: that data can be freely used, re-used and redistributed. While all data on HDX is shared under a license set by the contributor, HDX prioritizes public accessibility and a streamlined user experience, intentionally removing roadblocks, reducing login requirements and providing rapid download options to empower humanitarian users.

The very features designed to facilitate human impact have made HDX and other open platforms a prime target for high-frequency bot crawls. This surge in automated traffic now threatens the stability of these platforms, creating a digital paradox where the effort to keep data open risks obstructing the legitimate users we are most committed to serving.

Navigating a bot-fueled Internet

Rising automated traffic requires a more nimble approach to identifying, parsing and responding to various bot behaviors. The first step is recognizing that not all automated traffic is detrimental. We need to distinguish between types of bot traffic and interact successfully with each type.

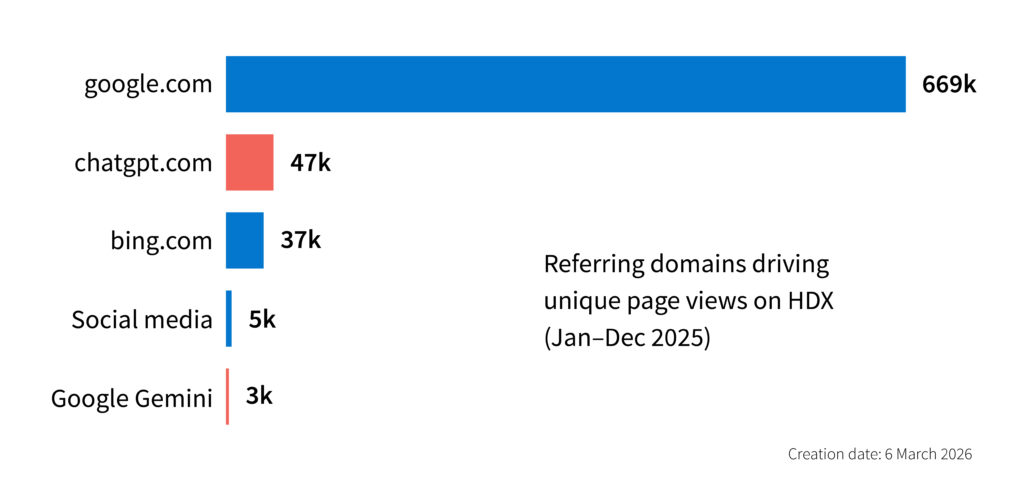

Search behaviors are shifting towards AI platforms like ChatGPT, Claude and Gemini, with chatbots becoming a dominant gateway to information. As such, it is important that humanitarian data appears in AI-generated results, as it has with traditional search engines. It is equally critical that AI platforms relay humanitarian data accurately, rather than contributing to misinformation. This will mean trusted answers can be found by those who need them – the central goal of HDX.

Managing bot identification and platform safety will require a strategic reallocation of resources towards infrastructure and specialized skillsets. This will ensure our platforms remain human-first but become AI-ready. Many of our data partners are also facing this challenge and we will need to navigate it together.

What has become evident is that traditional ‘vanity’ metrics like unique visitors and monthly active users are no longer sufficient in an era of programmatic bot traffic. The HDX team is experimenting with cohort-based tracking that follows specific user segments defined through behavioral events, e.g., users who search and then download a dataset. We will share more about this as we make progress. Please be in touch at centrehumdata@un.org to share your experiences and ideas.
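As a rough illustration of what a behavioral cohort could look like, the sketch below selects users who ran a search and later downloaded a dataset. It is not the HDX team's implementation. The `Event` structure, the event names `"search"` and `"download"`, and integer timestamps are assumptions.

```python
from __future__ import annotations

from typing import Iterable, NamedTuple


class Event(NamedTuple):
    user_id: str
    name: str  # hypothetical event names: "search", "download"
    ts: int    # timestamp, e.g. seconds since epoch


def search_then_download_cohort(events: Iterable[Event]) -> set[str]:
    """Return users with a download strictly after their first search."""
    first_search: dict[str, int] = {}
    cohort: set[str] = set()
    for ev in sorted(events, key=lambda e: e.ts):
        if ev.name == "search":
            # Remember only the earliest search per user.
            first_search.setdefault(ev.user_id, ev.ts)
        elif ev.name == "download":
            searched_at = first_search.get(ev.user_id)
            if searched_at is not None and searched_at < ev.ts:
                cohort.add(ev.user_id)
    return cohort


log = [
    Event("a", "search", 1), Event("a", "download", 5),   # search, then download
    Event("b", "download", 1), Event("b", "search", 2),   # wrong order
    Event("c", "search", 3),                              # never downloads
]
cohort = search_then_download_cohort(log)
```

A metric built this way counts sequences of meaningful actions rather than raw visits, which is harder for indiscriminate crawlers to inflate, although agents that navigate the web autonomously could still reproduce such sequences.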